Sometimes, I do not recognize a trap until I am already in it. Photos in iCloud is one such situation.

When Apple launched iCloud Photo Library in 2014, I was all-in. Not only is it where I store the photos I take on my iPhone, it is where I keep the ones from my digital cameras and my film scans, and everything from my old iPhoto and Aperture libraries. I have culled a bunch of bad photos and I try not to hoard, but it is more-or-less a catalogue of every photo I have taken since mid-2007. I like the idea of a centralized database of my photos, available on all my devices, that is functionally part of my backup strategy.1

But, also, it is large. When I started putting photos in there eleven years ago with a 200 GB plan, I failed to recognize it would become an albatross. iCloud Storage says it is now 1.5 TB and, between the amount of other stuff I have in iCloud and my Family Sharing usage, I have just 82 GB of available space. 2 TB seemed like such a large amount of space until I used 1.9 of it.

Apple’s next iCloud tier is a generous 6 TB, but it costs another $324 per year. I could buy a new 6 TB hard disk annually for that kind of money. While upgrading tiers is, by far, the easiest way to solve this problem, it only kicks that can down that road, the end of which currently has whatever two terabytes’ worth of cans looks like.

A better solution is to recognize I do not need instant access to all 95,000 photos in my library, but iCloud has no room for this kind of nuance. The iCloud syncing preference is either on or off for the entire library.

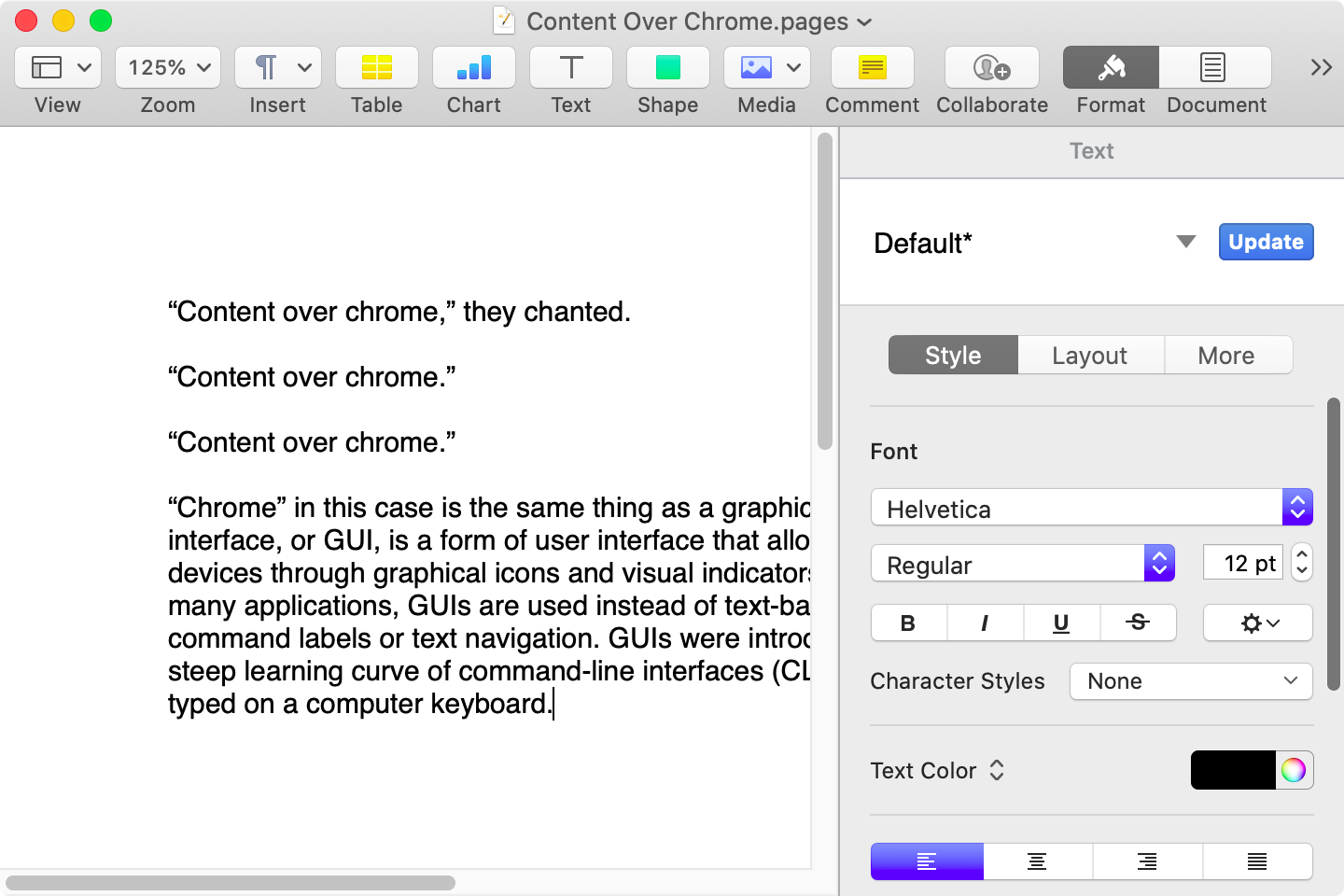

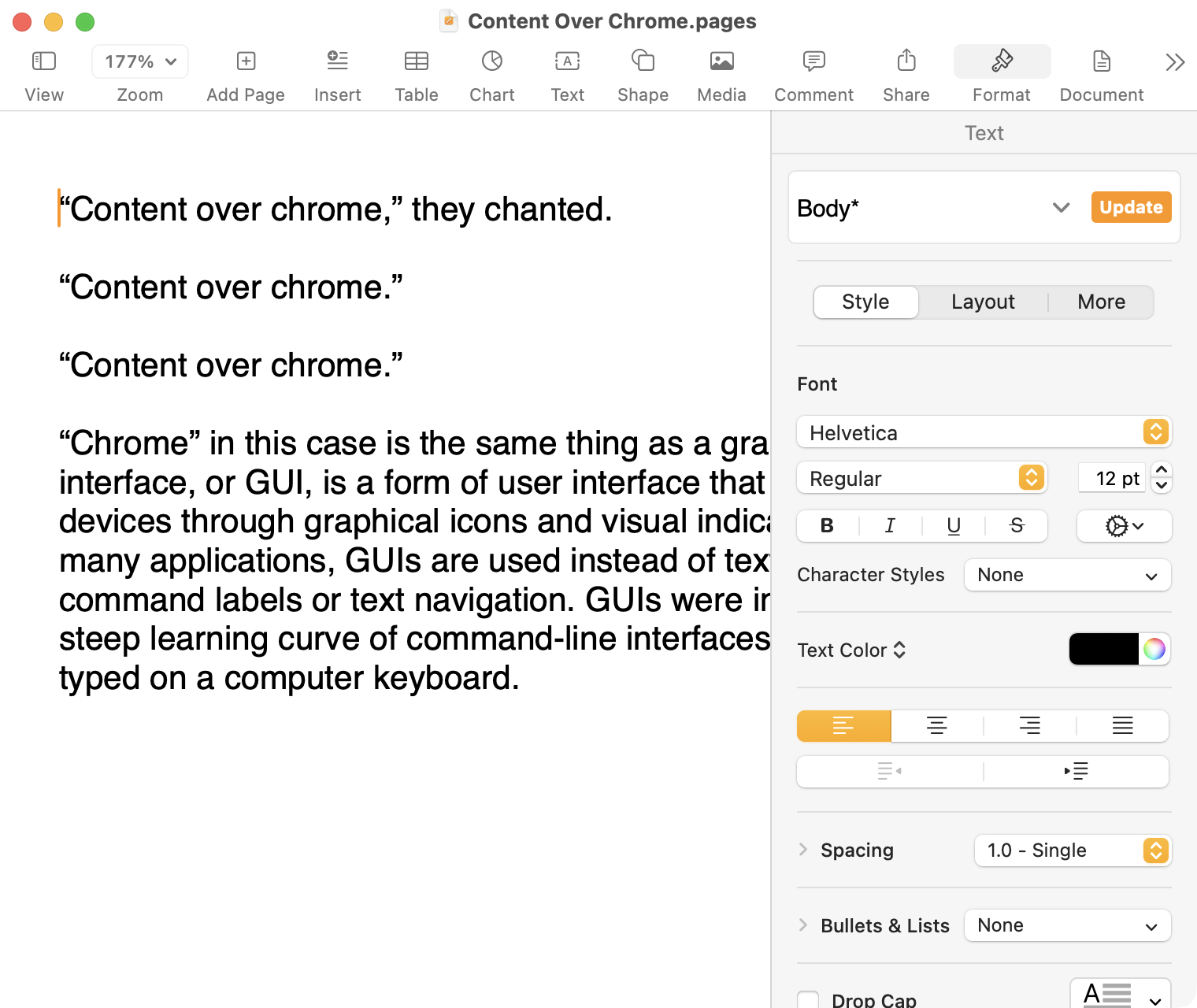

Unfortunately, trying to explain what goes wrong when you try to deviate from Apple’s model of how photo libraries ought to work will become a bit of a rant. And I will preface this by saying this is all using Photos running on MacOS Ventura, which is many years behind the most recent version of MacOS. It is not possible for me to use the latest version of Photos to make these changes because upgraded libraries cannot be opened by older versions of Photos. However, in my defense, I will also note that the version on Ventura is Photos 8.0, and these are the kinds of bugs and omissions that are inexcusable after that many revisions.

So: the next best thing is to create a separate Photos library, one that will remain unsynced with iCloud. Photos makes this pretty easy: launch it while holding the Option (⌥) key. But how does one move images from one library to the other? Photos is a single-window application; you cannot even open different images in new windows, let alone run separate libraries in separate windows. This should be possible, but it is not.

As a workaround, Apple allows you to import images from one Photos library into another — but not if the source library is synced with iCloud. You therefore need to turn off iCloud sync before proceeding, at which point you may discover that iCloud is not as dependable as you might have expected.

I have “Download Originals to this Mac” enabled, which means that Photos should — should — retain a full copy of my library on my local disk. But when I unchecked the “iCloud Photos” box in Settings, I was greeted by a dialog box informing me that I would lose 817 low-resolution local copies, something which should not exist given my settings, though reassuring me that the originals were indeed safe in iCloud. There is no way to know which photos these are nor, therefore, any way to confirm they are actually stored at full resolution in iCloud. I tried all the usual troubleshooting steps. I repaired my library, then attempted to turn off iCloud Photos; now I had 850 low-resolution local copies. I tried a neat trick where you select all the pictures in your library and select “Play Slideshow”, at which point my Mac said it was downloading 733 original images, then I tried turning off iCloud Photos again and was told I would lose around 150 low-resolution copies.

You will note none of these numbers add or resolve correctly. That is, I have learned, pretty standard for Photos. Currently, it says I have 94,529 photos and 898 videos in the “Library” view, but if I select all the items in that view, it says there are a total of 95,433 items selected, which is not the same as 94,529 + 898. It is only a difference of six items but, also, it is an inexplicable difference of six.

At this point, I figured I would assume those 150 photos were probably in iCloud, sacrifice the low-resolution local copies, and prepare for importing into the second non-synced library I had created. So I did that, switched libraries, and selected my main library for import. You might think reading one Photos library from another stored on the same SSD would be pretty quick. Yes, there are over 95,000 items and they all have associated thumbnails, but it takes only a beat to load the library from scratch in Photos.

It took over thirty minutes.

After I patiently waited that out, I selected a batch of photos from a specific event and chose to import them into an album, so they stay categorized. Oh, that is right — just because you are importing across Photos libraries, that does not mean the structure will be retained. There is no way, as far as I can tell, to keep the same albums across libraries; you need to rebuild them.

After those finished importing, I pulled up my main library again to do the next event. You might expect it to retain some memory of the import source I had only just accessed. No — it took another thirty minutes to load. It does this every time I want to import media from my main library. It is not like that library is changing; it is no longer synced with iCloud, remember. It just treats every time it is opened as the first time.

And it was at this point I realized the importer did not display my library in an organized or logical fashion. I had expected it to be sorted old-to-new since that is how Photos says it is displayed, but I saw photos from many different years all jumbled together. It is almost in order, at times, but then I would notice sequential photos scattered all over.

My guess — and this is only a guess — is that it sub-orders by album, but does no further sorting after that. This is a problem for me given a quirk in my organizational structure. In addition to albums for different events, I have smart albums for each of my cameras and each of my iPhone’s individual lenses. But that still does not excuse the importer’s inability to sort old-to-new. The event I spotted early on and was able to import was basically a fluke. If I continued using this cross-library importing strategy, I would not be able to keep track of which photos I could remove from my main library.

There is another option, which is to export a selection of unmodified originals from my primary library to a folder on disk, and then switch libraries, and import them. This is an imperfect solution. Most obviously, it requires a healthy amount of spare disk space, enough to store the selected set of photos thrice, at least temporarily: once in the primary library, once in the folder, and once in the new library. It also means any adjustments made using the Photos app will be discarded — but, then again, importing directly from the library only copies the edited version of a photo without any of its history or adjustments preserved.
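The disk space requirement is easy to check before committing to the import step. What follows is a minimal Python sketch, not anything Photos provides: `folder_size` and `room_for_import` are hypothetical helper names, and it assumes Photos copies imported files into the library package rather than referencing them in place.

```python
import shutil
from pathlib import Path


def folder_size(folder: Path) -> int:
    """Total size, in bytes, of every regular file under `folder`."""
    return sum(p.stat().st_size for p in folder.rglob("*") if p.is_file())


def room_for_import(export_folder: Path, library_volume: Path) -> bool:
    """True if the volume holding the destination library has at least as
    much free space as the exported folder occupies.

    Assumption: importing creates a full third copy of each file.
    """
    free_bytes = shutil.disk_usage(library_volume).free
    return free_bytes >= folder_size(export_folder)
```

Calling `room_for_import(Path("exported-originals"), Path.home())` with a real export folder (the folder name here is a placeholder) gives a rough yes or no. It ignores whatever thumbnails and database overhead Photos adds, so some headroom beyond the raw number is sensible.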

What I would not do under any circumstance, and what I would strongly recommend everyone avoid, is use the Export Photos option. This will produce a bunch of lossy-compressed photos, and you do not want that.

Anyway, on my first attempt at the export-originals-then-import process, I exported the 20,528 oldest photos in my library to a folder. Then I switched to the archive library I had created, and imported that same folder. After it was complete, Photos said it had imported 17,848 items, a difference of 2,680. To answer your question: no, I have no idea why, or which ones, or what happened here.
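One way to start narrowing down a mismatch like this is to inventory the exported folder independently of Photos. The sketch below is only that, a sketch: `inventory` and `sha256_of` are hypothetical helpers, and the two hypotheses it tests (that some exported files are byte-for-byte duplicates, or that several files sharing a base name were collapsed into a single item on import) are assumptions, not confirmed explanations for the numbers above.

```python
import hashlib
from collections import Counter
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large originals never sit fully in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def inventory(folder: Path) -> dict:
    """Count exported files several ways to compare against an import total."""
    # Skip hidden files such as .DS_Store, which are not photos.
    files = [p for p in folder.rglob("*")
             if p.is_file() and not p.name.startswith(".")]
    # Files sharing a path minus extension (e.g. IMG_1.JPG and IMG_1.MOV).
    stems = {str(p.with_suffix("")).lower() for p in files}
    # Byte-identical files produce the same hash.
    contents = {sha256_of(p) for p in files}
    return {
        "files": len(files),
        "by_extension": dict(Counter(p.suffix.lower() for p in files)),
        "distinct_stems": len(stems),
        "distinct_contents": len(contents),
    }
```

If `files` matches the number exported but `distinct_stems` or `distinct_contents` lands near the number Photos says it imported, that at least points toward paired or duplicate files rather than silent loss. If neither comes close, the gap remains unexplained.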

This sucks. And it particularly sucks because most data is at least kind of important, but photos are really important, and I cannot trust this application to handle them.

There is this quote that has stuck with me for nearly twenty years, from Scott Forstall’s introduction to Time Machine (31:30) at WWDC 2006. Maybe it is the message itself or maybe it is the perfectly timed voice crack on the word “awful”, but this resonated with me:

> When I look on my Mac, I find these pictures of my kids that, to me, are absolutely priceless. And in fact, I have thousands of these photos.
>
> If I were to lose a single one of these photos, it would be awful. But if I were to lose all of these photos because my hard drive died, I’d be devastated. I never, ever want to lose these photos.

I have this library stored locally and backed up, or at least I thought I did. I thought I could trust iCloud to be an extra layer of insurance. What I am now realizing is that iCloud may, in fact, be a liability. The simple fact is that I have no idea what state my photos library is currently in: which photos I have in full resolution locally, which ones are low-resolution with iCloud originals, and which ones have possibly been lost.

The kindest and least cynical interpretation of the state of iCloud Photos is that Apple does not care nearly enough about this “absolutely priceless” data. (A more cynical explanation is, of course, that services revenue has compromised Apple’s standards.) Many of these photos are, in fact, priceless to me, which is why I am questioning whether I want iCloud involved at all. I certainly have no reason to give Apple more money each month to keep wrecking my library.

I will need to dedicate real, significant time to minimizing my iCloud dependence. I will need to check and re-check everything I do as best I can, while recognizing the difficulty I will have in doing so with the limited information I have in my iCloud account. This is undeniably frustrating. I am glad I caught this, however, as I sure had not previously thought nearly as much as I should have about the integrity of my library. Now, I am correcting for it. I hope it is not too late.